When Might We Expect Artificial General Intelligence? Why?

An AI that can do everything a human can do seems far off. But when do AI experts think one will be developed, and why?

‘Between 2045 and 2090’, says a literature review of forecasts made by AI safety researchers. This matters: the world seems likely to look very different once Artificial General Intelligence (AGI)[1] is developed, and experts think this might happen within a lifetime.

We’ll look at examples of forecasts from two key categories: ‘judgement-based’ and ‘model-based’. Judgement-based forecasts involve asking people, such as experts in different fields, for their opinion. Model-based forecasts use a specific ‘model’: they reason concretely about what has happened in the past and use that to predict what could happen in the future.

We can think of these as two different ways of forecasting the weather. You might look outside at the cloud cover, go with your ‘feel’ for the day, and use your judgement to decide (or ask a friend whom you know is good at guessing the weather). Alternatively, you might use a mental model of how often it has rained in this area at this time of year in the past[2].

Judgement-Based Forecasts

There are two fundamental types of judgement-based forecast. The first is easy to understand: a survey of experts. One example is the AI Impacts survey, which asked 738 researchers who had published papers at AI conferences to answer questions about AI. The average[3] date given in the survey for when AGI would be developed was 2059.
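Footnote 3 notes that this ‘average’ is the median. The short Python sketch below uses invented answers, not the real survey data, to show why a median is a sensible summary here: a single respondent who names a very distant year drags the mean far out but leaves the median almost untouched.

```python
from statistics import mean, median

# Invented answers to "In what year will AGI be developed?"
# These are NOT the AI Impacts survey responses; they only illustrate the idea.
answers = [2035, 2040, 2045, 2050, 2055, 2060, 2070, 2080, 2500]

print("median:", median(answers))        # middle value of the sorted answers
print("mean:", round(mean(answers), 1))  # pulled upward by the single 2500 answer
```

With these made-up answers the median is 2055, while the one far-off answer lifts the mean to roughly 2104.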

The second type of judgement-based forecast is a little trickier and involves ‘prediction markets’. Prediction markets build on the old idea of the ‘wisdom of crowds’: when many people make guesses about something, their combined guess is often more accurate than a typical individual guess, because different people hold different pieces of information and their errors tend to cancel each other out.
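To see why errors can cancel out, here is a minimal Python simulation. It assumes, purely for illustration, that each person’s guess is the true value plus their own independent, unbiased random error; the true value, crowd size and error size are all made up.

```python
import random
from statistics import mean

random.seed(0)  # fixed seed so the run is reproducible

TRUE_VALUE = 100.0  # the quantity everyone is guessing (hypothetical)
N_PEOPLE = 500      # crowd size (hypothetical)

# Each guess = truth + that person's own independent, unbiased error.
guesses = [TRUE_VALUE + random.gauss(0, 20) for _ in range(N_PEOPLE)]

# How far off is the average individual, versus the crowd's combined (mean) guess?
typical_individual_error = mean([abs(g - TRUE_VALUE) for g in guesses])
crowd_error = abs(mean(guesses) - TRUE_VALUE)

print(f"average individual error: {typical_individual_error:.1f}")
print(f"crowd (mean) error:       {crowd_error:.1f}")
```

Because |mean(g) - truth| can never exceed the mean of |g - truth| (the triangle inequality), the averaged guess is never worse than the average individual error, and with many independent errors it is usually far better. If everyone shared the same bias, though, averaging would not remove it.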

Prediction markets use this idea to forecast all sorts of events by asking people when they think something will happen (or how likely they think it is to happen). One example for AGI is on Metaculus, a forecasting website that works much like a prediction market, although forecasters there don’t bet money. The current combined best guess on Metaculus is that AGI will be developed in 2032. It’s important to note that anybody can submit a forecast on Metaculus, not just AI experts.

Model-Based Forecasts

Model-based forecasts are much harder to summarise as a group, because each involves a specific model of the world and of which things are relevant to the development of AGI. In general, they rely on forming ‘priors’: expectations about when AGI might arrive that don’t require any detailed knowledge of AI progress, much like guessing the weather based only on when it has rained before, without specific knowledge of local cloud formations, air pressure and so on. It’s useful to have these forecasts alongside judgement-based ones because they approach the question in a very different way, so we can see where the two agree and disagree.

The Insight-Based AI Timelines Model is a tool that can be used to estimate timelines of AI based on how many ‘key insights’ are needed to develop AGI, and how many have already been discovered.

The idea is that any technology requires a certain number of insights: key technological discoveries or new ideas without which it cannot be developed. The model’s authors form their forecast by estimating how many insights AGI requires and combining this with basic historical data on how many insights are found each year.

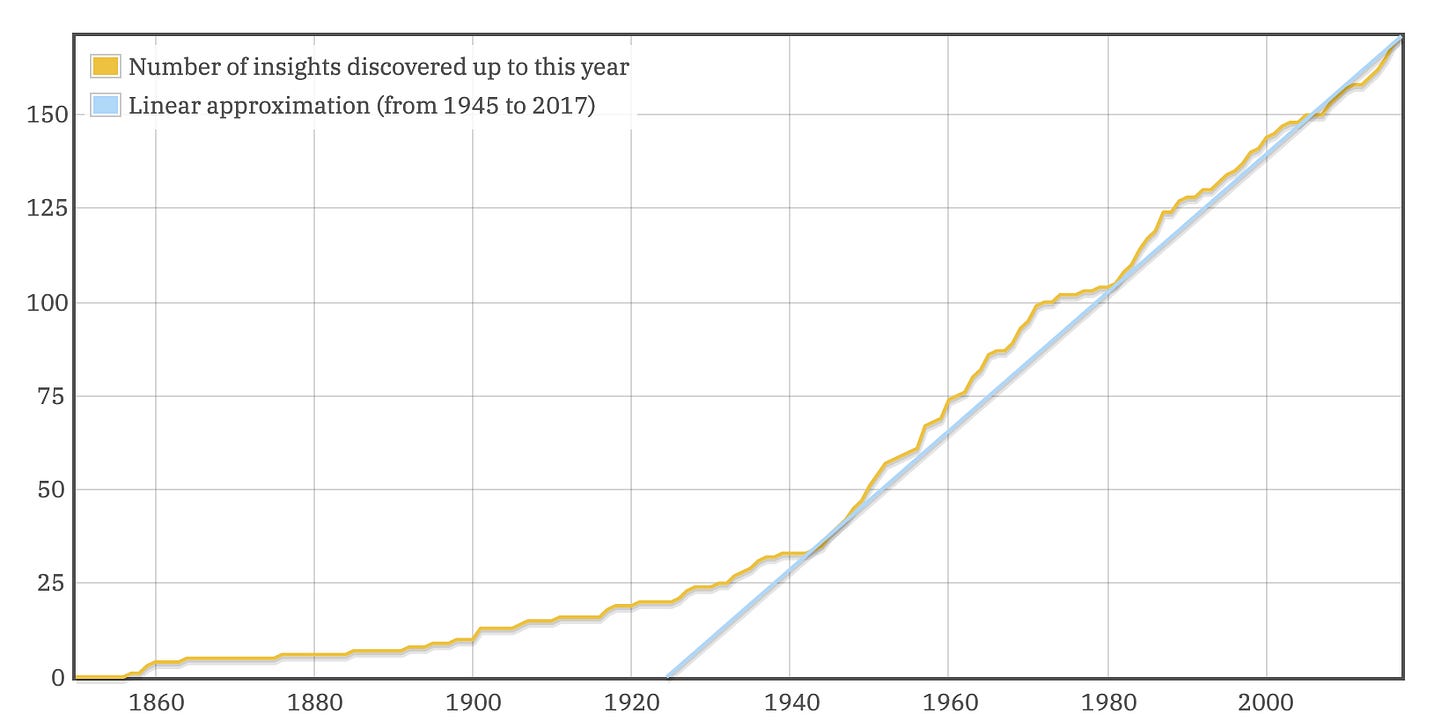

They look at such historical data and estimate that the number of insights being found each year is going up roughly linearly:

This is probably because more people work on the problem as its importance becomes more apparent, and their progress in turn draws in still more researchers. The authors note that this could instead reflect scientists picking ‘low-hanging fruit’, in which case progress will slow down again at some point. Their median estimate for AGI is later than the year 3000, but they put the probability of AGI being developed by 2100 at around 15%.
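The core arithmetic of an insight-based forecast can be sketched in a few lines of Python. This is a deliberately simplified, deterministic version under assumed inputs: the function name and every parameter value are hypothetical, not taken from the Insight-Based AI Timelines Model, which works with probabilities (hence its ‘15% by 2100’ figure) rather than single numbers.

```python
def year_all_insights_found(start_year: int,
                            found_so_far: float,
                            insights_needed: float,
                            rate_now: float,
                            rate_growth: float) -> int:
    """Return the first year in which the cumulative number of insights
    reaches insights_needed.

    Hypothetical model for illustration only: rate_now insights are found in
    the year after start_year, and the yearly rate then rises by rate_growth
    every year (a linear increase, as the model's authors estimate).
    """
    if rate_now <= 0 or rate_growth < 0:
        raise ValueError("need a positive rate that does not decline")
    year, found, rate = start_year, found_so_far, rate_now
    while found < insights_needed:
        year += 1
        found += rate        # insights discovered during this year
        rate += rate_growth  # next year's rate is a little higher
    return year


# Invented inputs: 50 of 100 required insights found so far, 1 insight/year now.
rising = year_all_insights_found(2020, 50, 100, rate_now=1.0, rate_growth=0.02)
constant = year_all_insights_found(2020, 50, 100, rate_now=1.0, rate_growth=0.0)
print(rising, constant)
```

With these made-up inputs, a slowly rising rate gives 2057, while a constant rate gives 2070. A ‘low-hanging fruit’ slowdown would push the date the other way, which this sketch does not model.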

‘Forecasting TAI[4] with Biological Anchors’ is a report from Open Philanthropy. In outline, the report does the following:

1. Estimate how much computation the human brain does.

2. Estimate how much computation would be required to train an AI that does that much computation (this is often much more than would be required to run that AI).

3. Look at past trends in how much computing power has been available year-on-year.

4. Assuming those trends continue, estimate when enough computing power will be available to train an AI that does as much computation as the human brain.

The report also adjusts for improvements in the efficiency of AI training algorithms, which reduce the computation needed over time. Besides this brain-based anchor, it uses five other similar models based on biology, and its median estimate is that TAI (roughly AGI) will be developed in 2052.
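The extrapolation described above (a trend in available computing power, plus the efficiency adjustment) can be sketched as a small Python function. It assumes, for illustration only, that the compute available for a training run doubles every fixed number of years and that algorithmic efficiency gains halve the compute required at a fixed rate; the function name and all numbers in the example are hypothetical, not the report’s estimates. The report itself works with probability distributions over these quantities rather than single values.

```python
import math
from typing import Optional


def year_compute_suffices(start_year: int,
                          required_flop: float,
                          available_flop_now: float,
                          compute_doubling_years: float,
                          algo_halving_years: Optional[float] = None) -> int:
    """Return the first whole year in which available training compute
    meets the (shrinking) requirement.

    Hypothetical model for illustration:
      available(t) = available_flop_now * 2 ** ((t - start_year) / compute_doubling_years)
      required(t)  = required_flop / 2 ** ((t - start_year) / algo_halving_years)
    Pass algo_halving_years=None to ignore algorithmic efficiency gains.
    """
    if available_flop_now >= required_flop:
        return start_year
    # The available/required ratio grows by this many doublings per year.
    doublings_per_year = 1 / compute_doubling_years
    if algo_halving_years is not None:
        doublings_per_year += 1 / algo_halving_years
    # Doublings needed to close the gap between available and required compute.
    doublings_needed = math.log2(required_flop / available_flop_now)
    return start_year + math.ceil(doublings_needed / doublings_per_year)


# Invented inputs: a million-fold compute gap and 2.5-year doubling times.
with_algo = year_compute_suffices(2020, 1e30, 1e24, 2.5, 2.5)
hardware_only = year_compute_suffices(2020, 1e30, 1e24, 2.5)
print(with_algo, hardware_only)
```

With these made-up inputs the function returns 2045, or 2070 without the algorithmic adjustment, which shows why the efficiency correction can matter as much as the raw computing trend.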

What all these forecasts have in common is that they make it at least plausible that AGI is coming soon, within a lifetime. That seems likely to have transformative effects on our lives and the world around us. Are we prepared for such a change?

[1] The different forecasts use slightly different definitions of AGI. For a rough sense of what AGI means, I’ve described it as ‘an AI that can do any task a human can do’.

[2] You might also consult a formal weather forecast, but this could count either as consulting an expert (judgement-based) or as consulting a specific model (which is what weather forecasts are actually based on), so I’ve left it out to keep the point clear.

[3] Median.

[4] ‘TAI’ stands for ‘Transformative Artificial Intelligence’, meaning AI that causes societal and economic change on the scale of the Industrial Revolution. Here, we’ll assume this means roughly human-level AI.